CS221 Exam Solutions

CS221

Spring 2019 Name:

| {z }

by writing my name I agree to abide by the honor code

SUNet ID:

Read all of the following information before starting the exam:

• This test has 3 problems printe

...

CS221 Exam Solutions

CS221

Spring 2019 Name:

| {z }

by writing my name I agree to abide by the honor code

SUNet ID:

Read all of the following information before starting the exam:

• This test has 3 problems printed on 32 pages and is worth 180 points total. It is your

responsibility to make sure that you have all of the pages.

• Only the printed (top) side of each page will be scanned so write all your answers on

that side of the paper.

• Keep your answers precise and concise. We may award partial credit so show all your

work clearly and in order.

• Don’t spend too much time on one problem. Read through all the problems carefully

and do the easier ones first. Try to understand the problems intuitively; it really helps

to draw a picture.

• You cannot use any external aids except one double-sided 81 2” x 11” page of notes.

• Good luck!

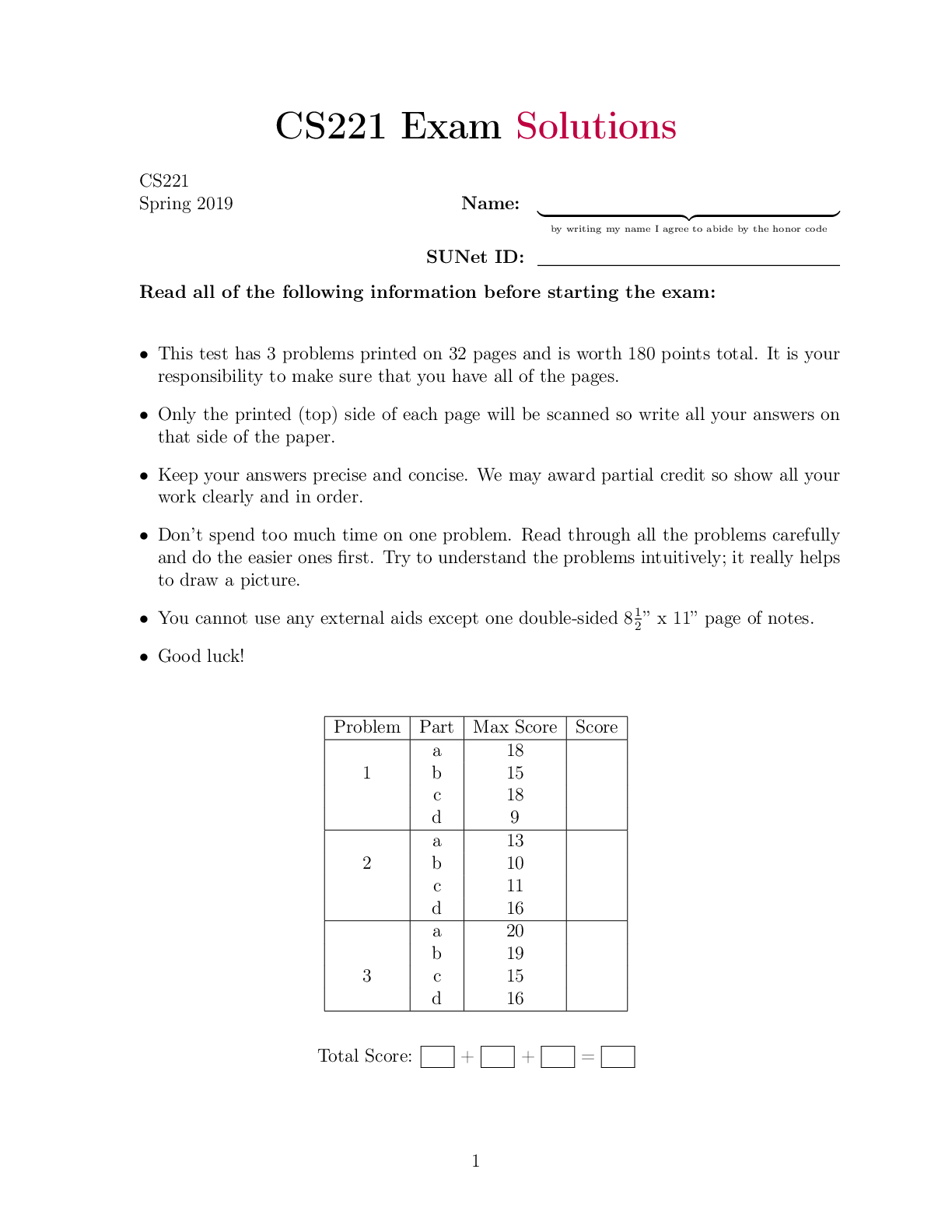

Problem Part Max Score Score

1

a 18

b 15

c 18

d 9

2

a 13

b 10

c 11

d 16

3

a 20

b 19

c 15

d 16

Total Score: + + =

11. Machine Learning (60 points) In an alternate universe, the course staff of CS 221

does not release the mechanism by which final grades are computed. As an enterprising

student, you decide to procrastinate by trying to predict final course grades.

You have obtained access to data points (x; y) for each person, where x 2 R6 gives 6

pieces of information about each student’s grades in previous classes and progress so far in

CS 221 (you don’t need to know what those are). You wish to design a model that predicts

the student’s final grade: A, B, C, D, or F.

(a) Binary classification. (18 points, 6 points each)

You start by trying to solve a simpler problem: only using the data x(i) = (x( 1i); x(2i))T 2

R2 for each person i in the historical database, you wish to predict whether student i

passes the class, label z. Thus, z = 1 when the student’s final grade is in fA; B; Cg

and they pass, and z = −1 when the student receives a fD; Fg and thus NC (no credit)

for the class. You have N training examples, x(1); : : : ; x(N) (viewing them in R2) with

associated pass/fail labels z(1); : : : ; z(N) 2 f−1; 1g.

(i) Before applying a machine learning algorithm, you plot x(1); : : : x(N) and observe

the following, where circle dots have label z = 1 and star dots have label z = −1:

Based on this plot, give an expression for one additional polynomial feature φ(x(i)),

you would add to the vector x(i) to train on, that would likely improve the performance of a machine learning model that used a linear predictor, so x(i) :=

(x( 1i); x( 2i); φ(x(i)))T.

Solution x2

1=49 + x2 2=9

2(ii) After playing around with the data, you realize you have many poorly classified

points where z and your prediction z^ = θT x are far from each other. Thus, you

wish to penalize large losses linearly, but wish to use a squared loss for non-outlier

data points. Given this background, construct a continuous, differentiable, loss

function ‘1 in the piecewise format below:

‘1(z; zb) = (? ? j otherwise z − zbj < 1

What loss function L(θ) (with respect to the penalty ‘1) do you minimize to find

the optimal predictor θ ?

Solution L(θ) = PN i=1 ‘1(z(i); θ>x(i)) where

‘1(z; zb) = (j12z(z−−zbj − zb)2 1 2 j otherwise z − zbj < 1

3(iii) With a step size of η = 0:1 and θ initialized to all zeros, manually compute one

step of a training update using stochastic gradient descent if you randomly select

data point x(1) = (0:9; 0:5); z(1) = 1 (you do not need to use the feature from (i)).

Solution We compute the gradient of ‘1(z(j); θ>x(j)) with respect to θ as

r‘1(z(j); θ>x(j)) = (− −x x( (j j) ) · · ( sign z(j)(−z(jθ)>−x(θjT))x(j)) j otherwise z(j) − θ>x(j)j < 1 :

Then we update θ := θ − η · r‘1(z(j); θ>x(j)), so θ = (0:09; 0:05)

[Show More]